HPC Newsletter 03/16

Dear colleagues,

The bwForCluster NEMO is now available to users from the fields of Elementary Particle Physics, Neuroscience and Microsystems Engineering, and to shareholders who have made additional investments. The hardware is complete and has been verified to comply with the specifications. The operating software is still being set up, and we are building up the application software stack. Early adopters can, however, already submit a Rechenvorhaben (short "RV", English: planned compute activities). Please see below for details. Once the RV has been validated, you and your co-workers can log in and explore the system. Alternatively, you can wait for the switch to production mode, which is planned for September 1st.

We would like to thank everybody who attended or contributed to the NEMO user assembly and the inauguration event on July 14th. The user assembly gave us very valuable feedback, and the inauguration event had a significant public relations impact for NEMO and the bwHPC initiative. We hope you enjoyed both events as much as we did.

Photo Gallery of inauguration ceremony and first NEMO user assembly

Your HPC Team, Rechenzentrum, Universität Freiburg

Table of Contents

Upcoming events and important dates

NEMO First User Assembly

bwForCluster NEMO and bwForCluster BinAC inauguration

bwForCluster NEMO ramp up phase

End of Life for Test Cluster NEMO

Entitlement and Rechenvorhaben

AlsaCalcul GPU Programming Challenge: And the Winner is ... Freiburg

Publications

Upcoming events and important dates

31.08.2016: End of life for test cluster NEMO

31.08.2016: ANSYS Software Licence on bwUniCluster expires

15-16.09.2016: 45th SPEEDUP Workshop on High-Performance Computing in Basel

29.09.2016: Joint ViCE/CITAR Workshop with focus on use cases for Virtual Research Environments

12.10.2016: 3rd bwHPC-Symposium in Heidelberg (limited number of participants, please register early)

13-14.10.2016: ZKI Arbeitskreis Supercomputing

For a list of upcoming course opportunities, please see http://www.bwhpc-c5.de/en/course_opportunities.php

NEMO First User Assembly

On July 14th, the first NEMO user assembly took place. The date was chosen to give NEMO users from Baden-Württemberg the chance to attend both the user assembly and the inauguration ceremony in a single business trip. The large seminar room in the Rechenzentrum in Freiburg was well filled, with about 50 scientists from the respective communities present. The HPC team gave a presentation on NEMO with background information on the ideas underlying its operating model. Discussions revolved around important issues of governance and fair use of resources. The term "Rechenvorhaben" needed particular explanation. There was general consensus that "Rechenvorhaben" will initially be used for fairshare queuing and that work group or project leaders should be responsible for applying for new "Rechenvorhaben".

The first NEMO user assembly was a very pleasant experience, and the HPC team wishes to thank all attendees.

NEMO presentation by the HPC team

bwForCluster NEMO and bwForCluster BinAC inauguration

On July 14th, the inauguration ceremony for the bwForCluster BinAC (Tübingen) and the bwForCluster NEMO (Freiburg) was held at the Rechenzentrum in Freiburg. During the press conference, questions were answered by representatives of the two universities and of the Ministry of Science, Research and the Arts Baden-Württemberg. Additionally, two work group leaders from NEMO's scientific communities, Prof. Dr. Markus Schumacher (Physics) and Prof. Dr. Stefan Rotter (Neuroscience), were present to answer the most pressing question the general public supposedly has: "Why do scientists need this big thing and what will they do with it?"

After the press conference, NEMO and BinAC were jointly started with a "push of the magic button" by Theresia Bauer, Minister of Science, Research and the Arts of Baden-Württemberg; Prof. Dr. Dr. h.c. Hans-Jochen Schiewer, rector of the University of Freiburg; Prof. Dr. Peter Grathwohl, pro-rector for research of the University of Tübingen; and Prof. Dr. Thomas Walter (Tübingen) and Prof. Dr. Gerhard Schneider (Freiburg), directors of the computing centers in Tübingen and Freiburg.

The public part of the inauguration took place in the Otto-Krayer-Haus of the University of Freiburg. During the first part of the event, about 100 attendees from various scientific fields and from universities across Baden-Württemberg listened to short talks given by the aforementioned representatives on the importance of the federated bwHPC approach and on the milestone reached now that all four bwForClusters and the bwUniCluster are operational.

The mandatory coffee break was coupled with a poster session where scientists could present results obtained on the existing bwClusters or their planned activities on the new bwForClusters. Members of the federated user support project bwHPC-C5 used the opportunity to discuss HPC activities with current and prospective users.

The coffee break was followed by a vendor-specific technology session. DALCO, the company that built the bwForCluster NEMO, hosted a talk by Hans Pabst from Intel titled "Intel Xeon Phi x200 (Knights Landing): Performance Impressions and Best Practices". In contrast to this "Many Integrated Cores" (MIC) approach for boosting HPC performance, the second technical talk, given by Ralph Hinsche from Nvidia, was titled "Tesla P100 GPU and DGX-1 Deep Learning Appliances". This talk, representing the "general-purpose computing on graphics processing units" (GPGPU) approach, was hosted by Megware, the company that built the bwForCluster BinAC.

The inauguration event concluded with an informal get-together at the Rechenzentrum, where all participants had the opportunity to experience the bwForCluster NEMO live and in stereo.

The event was covered in the media, e.g. SWR-Landesschau (video), heise online, Badische Zeitung, Schwäbisches Tagblatt and Baden-FM (audio).

bwForCluster NEMO ramp up phase

Since the delivery of the first hardware, the HPC team has been working on getting the bwForCluster NEMO ready for the ENM communities (Elementary Particle Physics, Neuroscience and Microsystems Engineering). The system has since been verified to fulfill the given specifications. The infrastructure servers and the provisioning system are in place, although some small details still need to be ironed out.

Please take a look at the updated schedule for the ramp-up phase:

- 01.08.2016: Start of alpha phase (done)
  - Configuration phase I, no stable operation expected
  - Expect frequent short downtimes and killed jobs
  - No support guaranteed, but feedback is appreciated
  - Recommended for early adopters (we will contact you)

- 15.08.2016: Start of beta phase (in progress)
  - Configuration phase II, semi-stable operation
  - Expect occasional short downtimes and killed jobs
  - No support guaranteed, but feedback is appreciated
  - Recommended for experienced HPC users

- 01.09.2016: Production mode
  - Stable operation
  - Downtimes for maintenance with prior announcement
  - Full support via enm-support@hpc.uni-freiburg.de
  - Recommended for aspiring HPC users

End of Life for Test Cluster NEMO

The end-of-life date for the old test cluster has been moved to 31.08.2016. You will still be able to access your home directory after that date until the end of September, but you will no longer be able to start compute jobs, since the compute nodes will be powered off. The new bwForCluster NEMO is much more powerful and more energy-efficient. Furthermore, the HPC team would like to focus its operating efforts and user support exclusively on the new hardware.

For users from our designated scientific communities (Elementary Particle Physics, Neuroscience and Microsystems Engineering) and for NEMO shareholders, the operating mode on the bwForCluster NEMO is the same as on the old test cluster NEMO. The only notable changes are that you have to be associated with a Rechenvorhaben (see below) and that you start with a clean home directory, so you have to copy any relevant data from the test cluster NEMO yourself. Please note that home directories have a quota of 100 GB, and that for optimal performance or for large amounts of data you are advised to allocate workspaces on the parallel file system.

Users who have worked on the NEMO test cluster but do not belong to the ENM communities should note that they can use compute resources on the bwUniCluster without any scientific application process and irrespective of their scientific domain. All that is needed is the bwUniCluster entitlement, and the procedure for obtaining it is specific to the university you belong to. In addition to this generally available HPC resource, you might be eligible to use one of NEMO's sibling bwForClusters in Mannheim/Heidelberg, Ulm or Tübingen. The uniform procedure for getting resources on any bwForCluster is to apply for a Rechenvorhaben. If your scientific domain is covered by one of these other bwForClusters, you will be granted resources there.

Entitlement and Rechenvorhaben

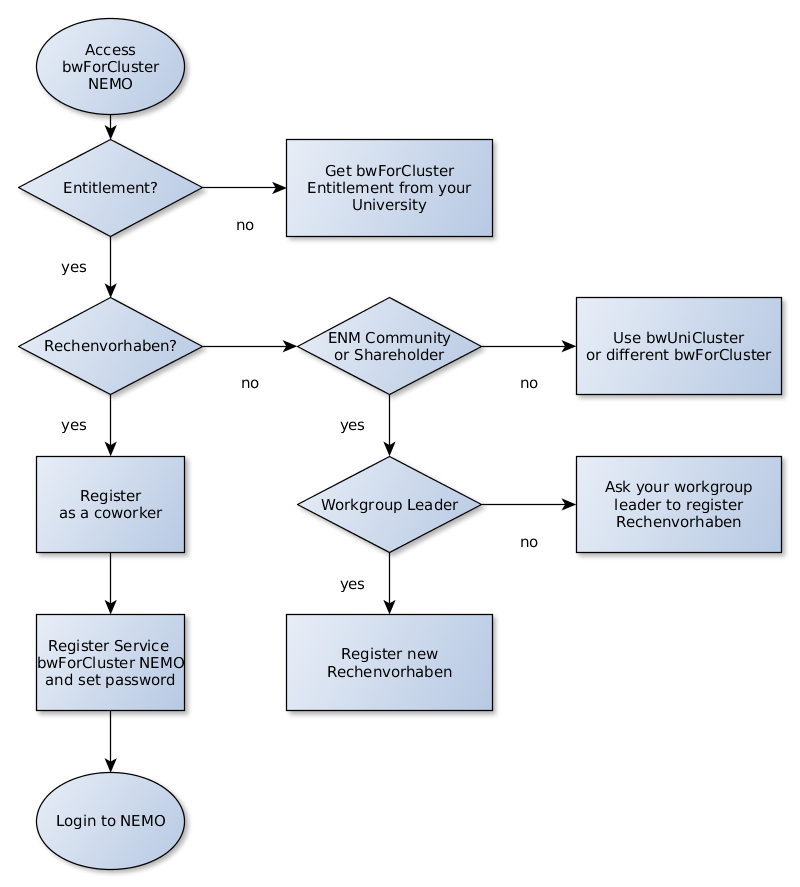

With the bwForCluster NEMO soon entering production mode, we ask scientists to start migrating from the old test cluster. To be granted access to the bwForCluster NEMO, two prerequisites must both be met. They do not depend on one another, so they can be processed in parallel.

The first prerequisite is to obtain the bwForCluster entitlement. The procedure differs slightly from university to university but is generally straightforward. Users from the University of Freiburg get the combined bwUniCluster/bwForCluster entitlement by filling out this web form. Users from other universities should consult the bwHPC Wiki. Each scientist can do this independently of their scientific supervisor. Please note that while the bwForCluster entitlement is necessary, it is not sufficient to be granted access to the bwForCluster NEMO or any other bwForCluster.

The second prerequisite is being associated with a Rechenvorhaben (i.e. planned compute activities). If a Rechenvorhaben from your work group or project has already been approved, please ask your work group or project leader for the RV ID and the RV password. Both are needed to associate yourself with the Rechenvorhaben and gain access to NEMO.

If there is no Rechenvorhaben for your work group yet, please ask your work group leader to submit a request for a new Rechenvorhaben using the web form, giving a description of the planned compute activities, the scientific field and an estimate of the resources needed. In general, only Rechenvorhaben from NEMO shareholders and from the scientific domains of Elementary Particle Physics, Neuroscience and Microsystems Engineering will be accepted for the bwForCluster NEMO. Rechenvorhaben not meeting these requirements will be routed to one of the other bwForClusters or to the bwUniCluster.

For shareholders and work group leaders who contributed to the grant application for the bwForCluster NEMO, the description of the Rechenvorhaben can be kept short by mentioning either the contribution to the NEMO grant application or the shareholder status. Optionally, an updated short description can be submitted as well.

Distributing shared HPC resources at this scale requires a certain amount of governance and bureaucracy. We have tried to make the procedure as simple as possible, and we hope it is still significantly easier than writing a full application for the higher-tier supercomputers in Baden-Württemberg.

AlsaCalcul GPU Programming Challenge: And the Winner is ... Freiburg

The AlsaCalcul GPU Programming Challenge took place from June 14th to 16th in Strasbourg. The workshop and the subsequent contest were designed to help users migrate existing applications to platforms equipped with GPU accelerators and optimize existing GPU-aware applications for more recent and more powerful GPU systems.

Florian Sittel, a PhD student in Prof. Dr. Gerhard Stock's work group for Biomolecular Dynamics, took part in the competition and won 1st prize, a Tesla K40 from Nvidia.

Quoting from his report of the event (translated from German):

"In our work group, we study the behavior of biological molecules such as proteins. One effect that also controls a wide range of processes in the human body is so-called 'allostery', in which, for example, a small molecule docks onto one part of a protein and thereby activates or deactivates a function in another part. This process can be simulated, but it is very hard to observe in the resulting large amount of data.

Specifically, I wrote a program that tries to describe this flow of information within the molecule based on statistical quantities ("transfer entropy, Kullback-Leibler divergence & mutual information").

With our typical amount of data, a single simulation would take days or sometimes even weeks on a six-core Core i7 with hyperthreading under full load (with parallelism already optimized using OpenMP). With a Tesla K40, the same calculation can be done in minutes to hours. With the port made at the AlsaCalcul workshop and subsequent optimizations of the GPU code, the speedup is about a factor of 50."

Congratulations to Florian on his accomplishment.

Publications

Please inform us about any scientific publication or other published work that was achieved using bwHPC resources (bwUniCluster, bwForCluster NEMO, bwForCluster BinAC, bwForCluster JUSTUS or bwForCluster MLS&WISO). An informal e-mail to publications@bwhpc-c5.de is all it takes. Thank you!

Your publication will be referenced on the bwHPC-C5 website:

http://www.bwhpc-c5.de/en/user_publications.php

We would like to stress that it is in our mutual interest to promote all accomplishments made using bwHPC resources. We are required to report to the funding agencies during and at the end of the funding period. For these reports, scientific publications are the most important indicators of success. Further funding will therefore depend strongly on both the quantity and the quality of these publications.

HPC Team, Rechenzentrum, Universität Freiburg

http://www.hpc.uni-freiburg.de

bwHPC initiative and bwHPC-C5 project

http://www.bwhpc.de

To subscribe to our mailing list, please send an e-mail to hpc-news-subscribe@hpc.uni-freiburg.de

If you would like to unsubscribe, please send an e-mail to hpc-news-unsubscribe@hpc.uni-freiburg.de

Previous newsletters: http://www.hpc.uni-freiburg.de/news/newsletters

For questions and support, please use our support address enm-support@hpc.uni-freiburg.de